My Role and Responsibilities

- Led the agent-assisted developer journey across three lifecycle phases

- Led cross-functional workshops and developer interviews

- Led end-to-end experience design for data integration

- Led design of the Agent Orchestrator, a cross-platform, natural-language collaborator spanning the web console, browser extension, and IDE

- Vibe-coded UI explorations and interactive prototypes in Cursor and Amazon Kiro IDE

- Used Claude for exploratory research, technical subject-matter expertise, and spec authoring

- Established Developer Experience Outcomes (DXOs) as measurable success criteria for each job-to-be-done (JTBD)

- Incorporated JTBDs and DXOs into leadership presentations and requirements documents

The Situation

With the platform model defined and the design tenets established, the next step was mapping how a developer actually moves through the experience. The map drew on cross-functional workshops, developer interviews, competitive benchmarking, and jobs-to-be-done defined across the lifecycle.

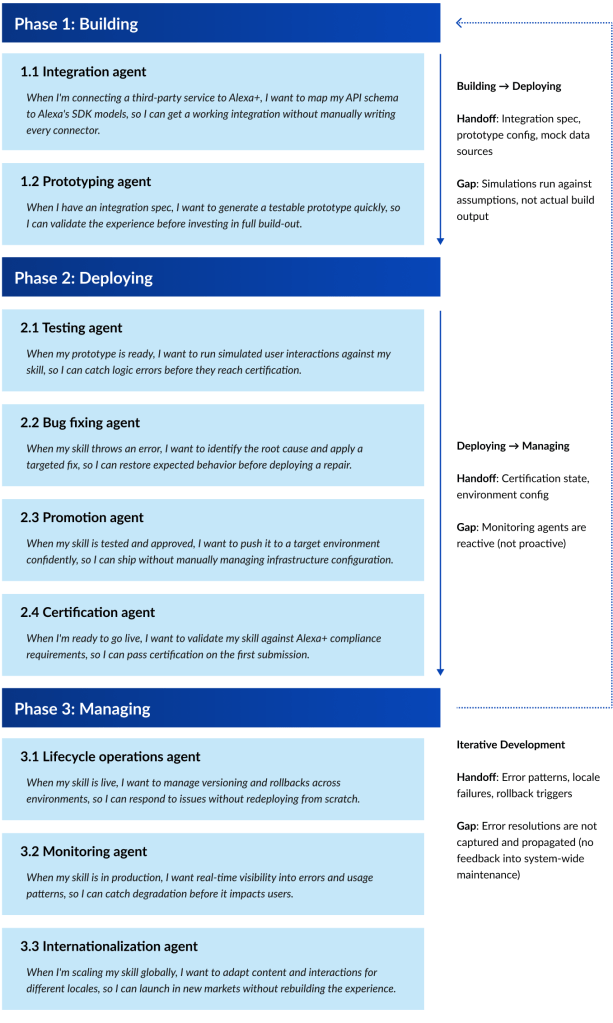

The agent-assisted journey spans three phases (building, deploying, and managing), each supported by a distinct set of agents scoped to the jobs a developer needs to accomplish at that stage. The diagram also surfaces the handoffs and gaps between phases: where context transfers cleanly, and where the current system may fall short.

My Outcomes

Agent-Assisted Developer Journey Map

This diagram organizes the full developer lifecycle into phases, each containing agents that help developers complete their jobs. Each agent is framed as a job-to-be-done, expressed in developers’ own words.

Integration Experience Definition

Integration is the first step of the developer journey. Depending on the integration path, the agent performs different tasks and the developer faces different decisions and tradeoffs.

- API Integration: “I have a service. I want Alexa+ to call it.” The developer submits an API URL and supporting assets. The agent detects the service, routes capabilities, and generates the integration.

- MCP (Model Context Protocol): “I have capabilities. I want Alexa+ to discover them.” The developer submits a tool manifest. The agent reads it, infers what the tools do, and surfaces a structured summary for developer confirmation.

- Agent Relay: “I have an agent. I want Alexa+ to hand off to it.” The developer defines scope boundaries (hand-off and hand-back conditions). The agent infers initial boundaries as a starting point, and the developer must approve or negotiate them before proceeding.

This flowchart illustrates the three integration paths in parallel and shows where they converge in the build phase. The visual helped parallel technical teams see how their work affected the integration experience. It also surfaced the design complexities that differ across the paths, which helped manage scope expectations.

Agent Orchestrator

Alexa+ development was powered by numerous underlying sub-agents, but there was no unified client-side presence that made them feel like a cohesive system. Developers were context-switching across disconnected tools with no single source of truth.

I designed the Agent Orchestrator as that unified presence: a natural-language collaborator instantiated across the web console, browser extension, and IDE, meeting developers where they already worked.

The core design problem was making a complex multi-agent system feel like one trusted collaborator rather than a collection of tools. Key decisions included:

- Advocated for persistent context carry-over across platforms and roles, so handoffs didn’t require developers to re-explain the situation

- Pushed for a natural-language UI to support builders across the full range of technical skill

- Defined the orchestrator’s personality as that of an engineer with deep institutional knowledge: supportive and educational, but always focused on getting the job done

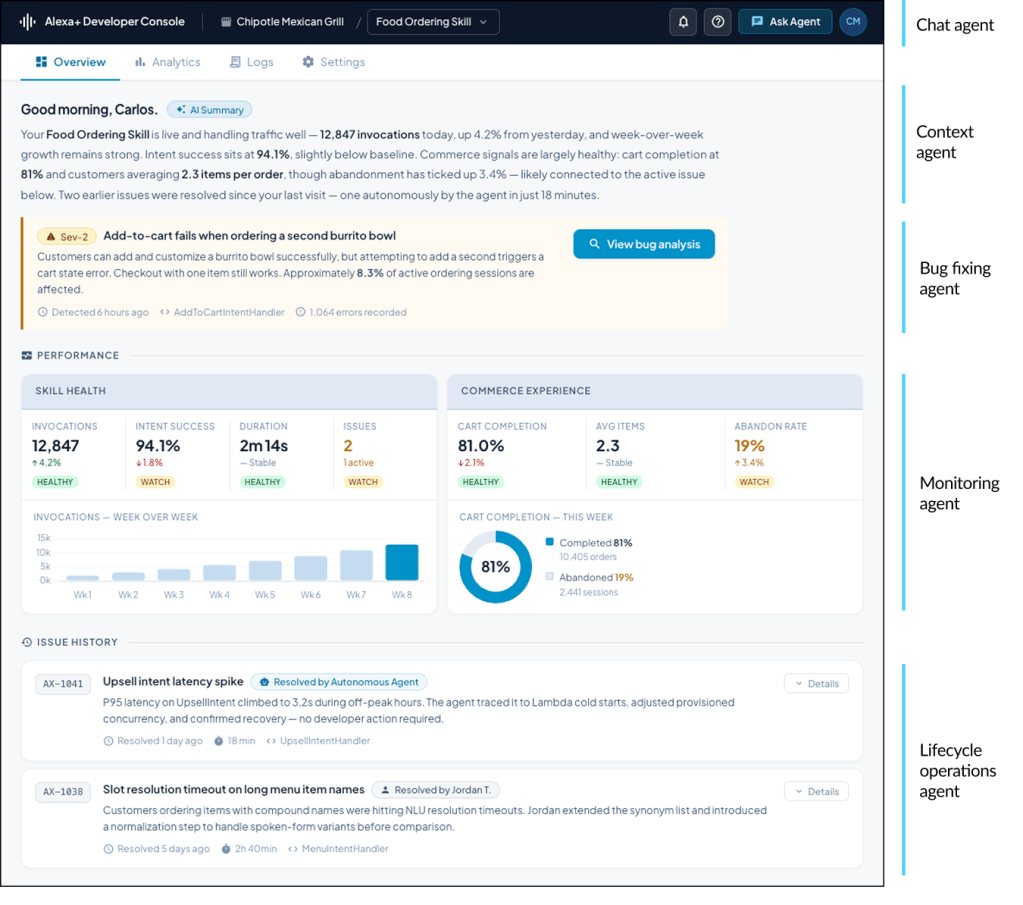

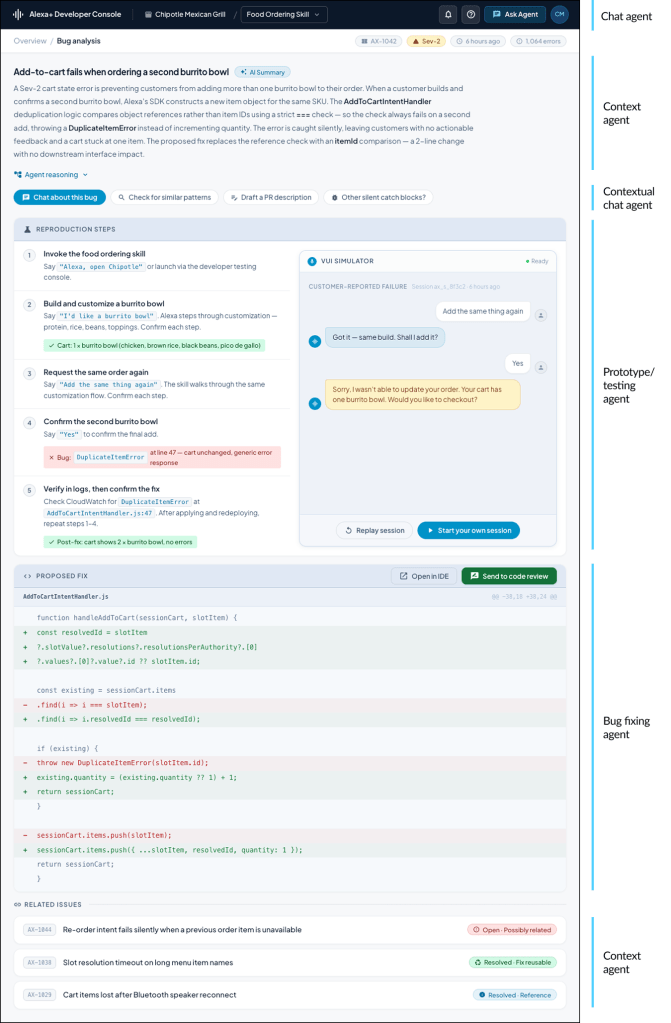

To illustrate the concept, I designed a north-star experience that follows a production bug through its full lifecycle: detection on the web dashboard, investigation in the browser extension, and resolution in the IDE, with bidirectional ticket updates throughout and a handoff between a PM and an engineer.

UX demo available upon request

Early vibe-designed explorations

Daily dashboard – Source of truth

Bug analysis deep dive

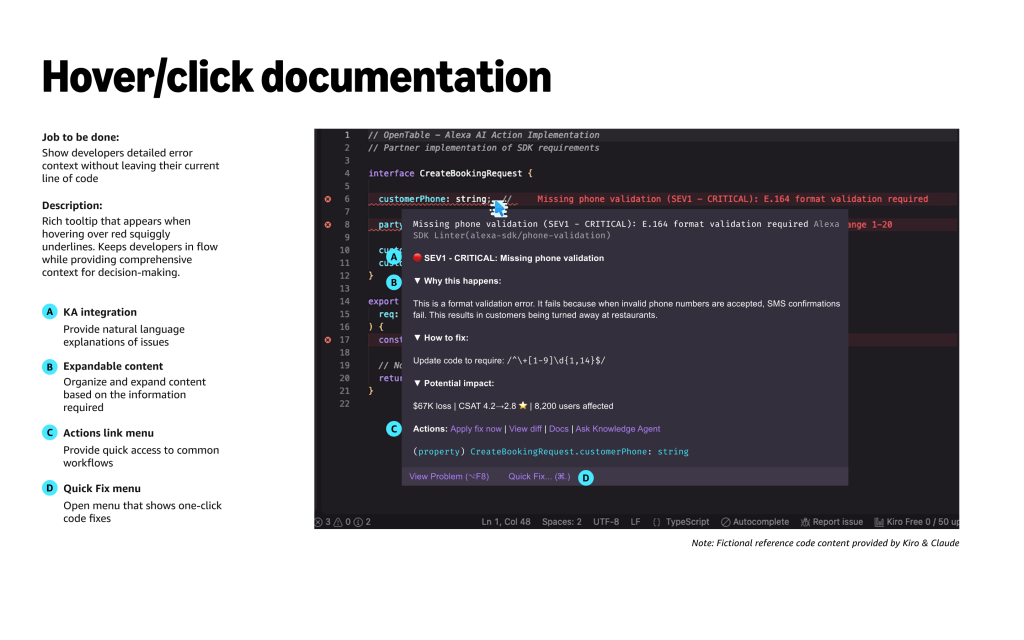

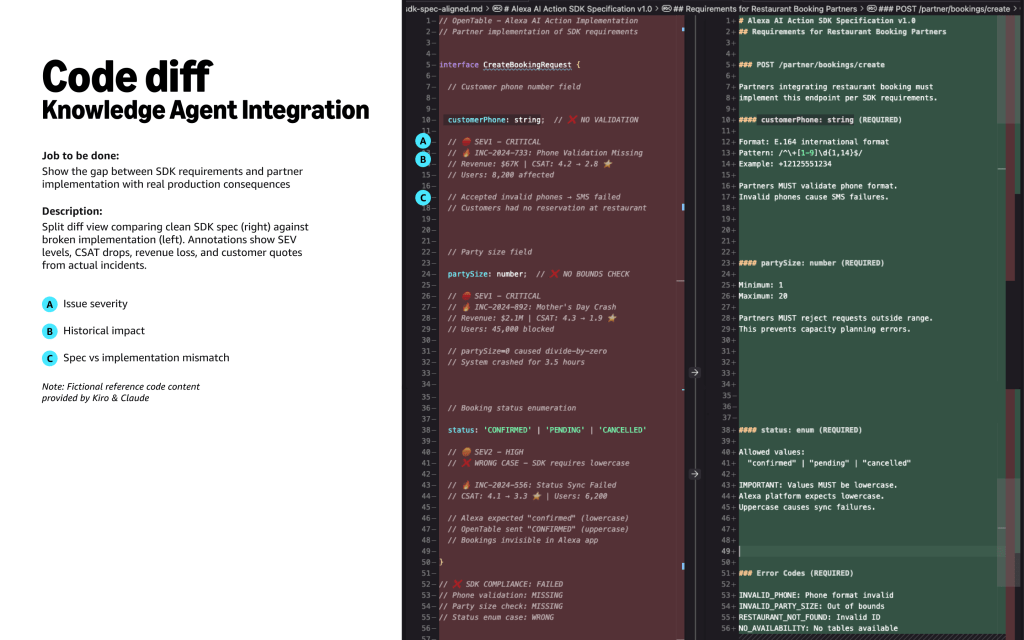

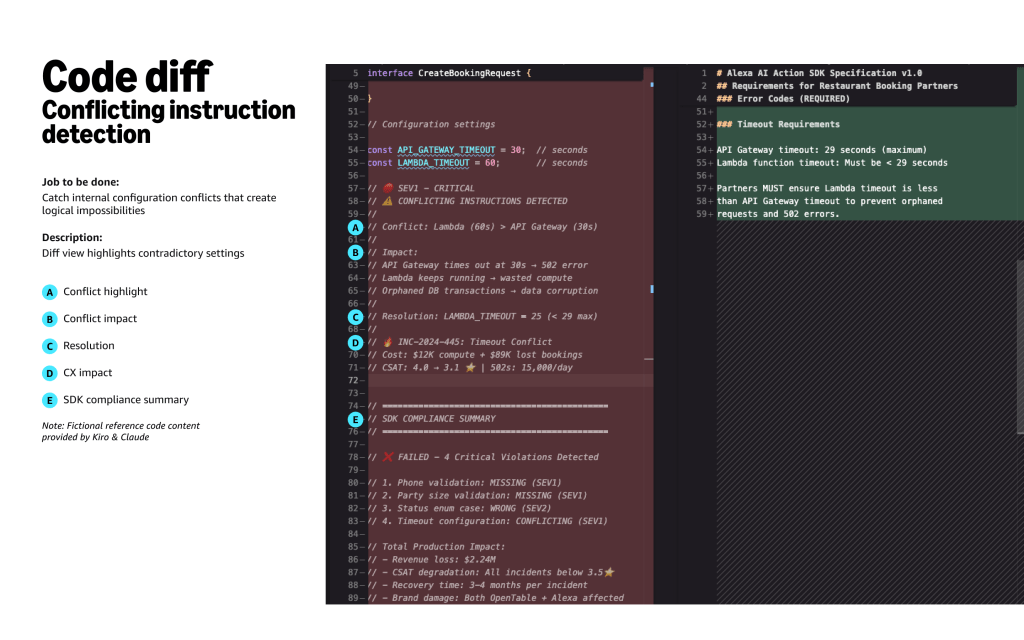

Linter – Kiro IDE plugin

My Approach

- Interviewed Alexa developers and solutions architects to understand where the journey created friction, confusion, or unnecessary overhead

- Collaborated closely with solutions architects and technical writers to ensure that technical requirements were reflected accurately in the task flows

- Attached measurable outcomes to each job-to-be-done, creating Developer Experience Outcomes (DXOs) that gave the team a concrete definition of success at every stage

- Defined the distinct roles of agents, judges, and the orchestrator across the journey, establishing accountability for what the system handles autonomously and what requires human oversight

- Identified where AI could meaningfully reduce cognitive load through scaffolding and anticipation, and where human judgment needed to remain in the loop

Key Decisions

- Scoped initial design work to the API and MCP integration paths and deferred Agent Relay. API and MCP integrations covered the majority of third-party (3P) use cases and could be validated independently, while Agent Relay required deeper cross-functional alignment before the experience could be responsibly defined.

- Partnered with solutions architect teams to define Agent Relay as a jointly owned workstream rather than a pure design deliverable, recognizing that scope boundaries and handoff logic required technical authorship

- Directly incorporated JTBDs and Developer Experience Outcomes into leadership presentations, vision decks, and requirements documents to establish shared success criteria