My Role and Responsibilities

- Founding Lead AI UX Designer on a 0-to-1 rebuild of the Alexa+ Studio developer platform, transitioning from deterministic to probabilistic AI behavior

- Authored product design tenets governing human-AI interaction decisions

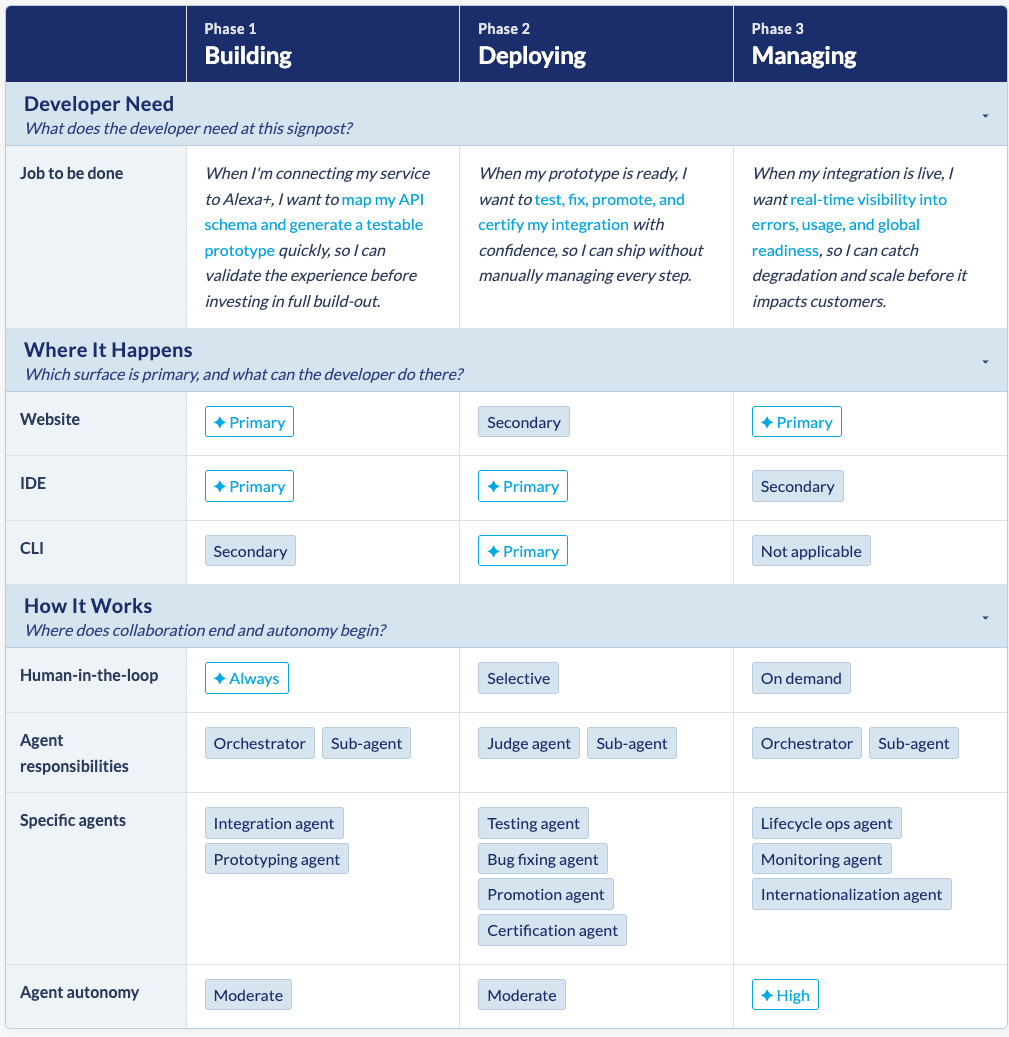

- Defined platform architecture across Build, Deploy, and Manage phases, mapping human vs. agent responsibilities

- Led primary and secondary research, translating findings into Jobs-to-be-Done and Developer Experience Outcomes

- Vibe-coded UI explorations and interactive prototypes in Cursor IDE and Amazon Kiro IDE

- Used Claude as a technical SME and for authoring specs that served as the source of truth for prototyping

- Prioritized the product design roadmap across internal and third-party developer needs

The Situation

Amazon Alexa+ is an AI-powered personal assistant that gets things done. It represented a significant pivot from deterministic to probabilistic customer experiences, and that change required a complete 0-to-1 rebuild of the developer experience, with the following objectives:

- Self-service SDK integration experience

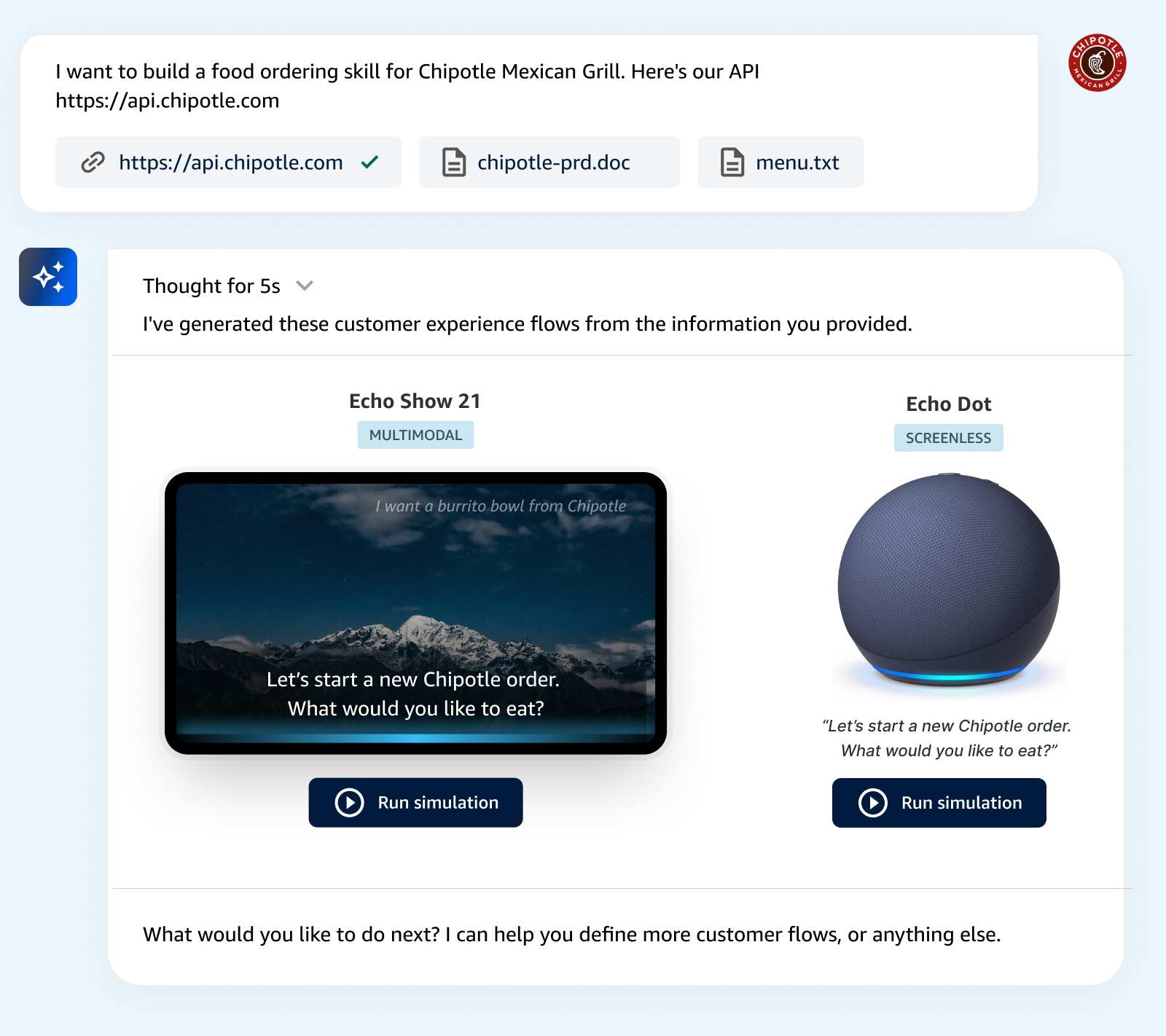

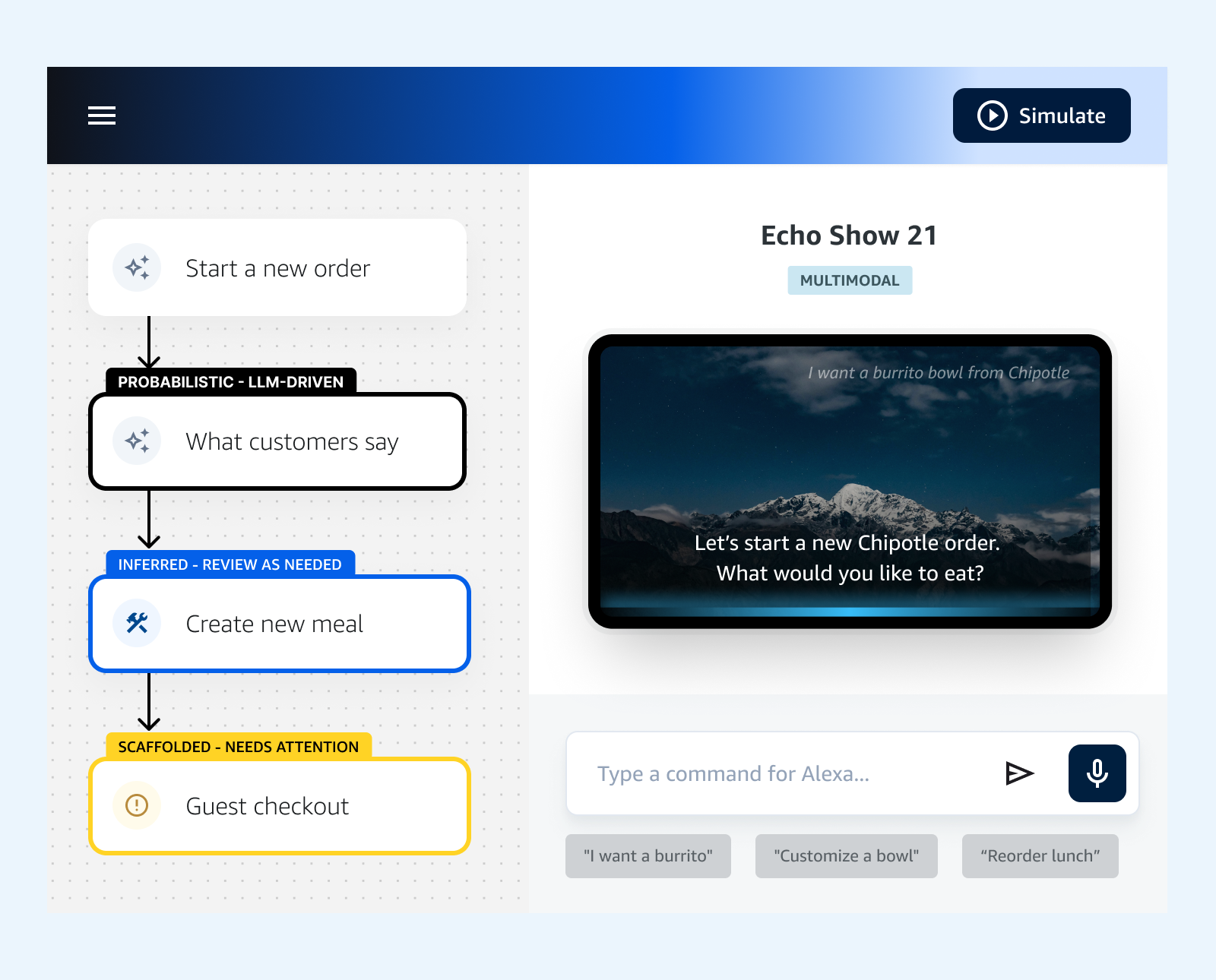

- Customer experience simulation and debugging

- Autonomous and directed testing

- Monitoring and anomaly detection

- End-to-end agentic inference and assistance

- Bi-directional updates of information and context

- Personalization & contextualization based on tasks, job roles, and permissions

As the Lead AI UX Designer, I established design tenets and a platform overview of our developer experience. Together, these defined the human-AI behavioral dynamics, agent instantiation, and primary objectives across the journey.

Product Design Tenets

I authored these design tenets to govern product design decisions for the Alexa+ developer experience. They were an important tool for building consensus around human-AI experiences.

Close the gap between intent and outcome

Developers describe what they want and the system handles the translation. Every interaction starts from meaning, not syntax.

Treat AI as a collaborator

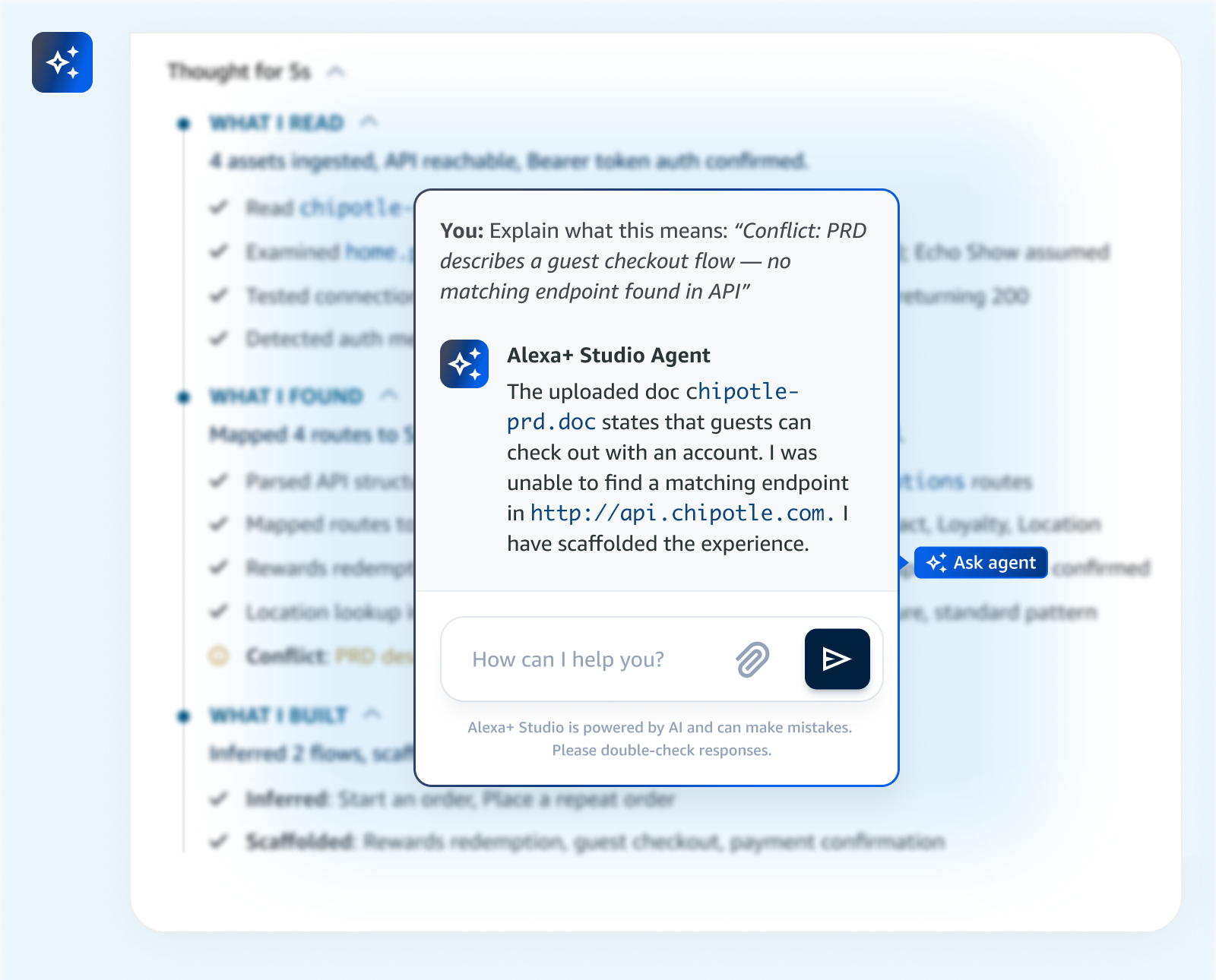

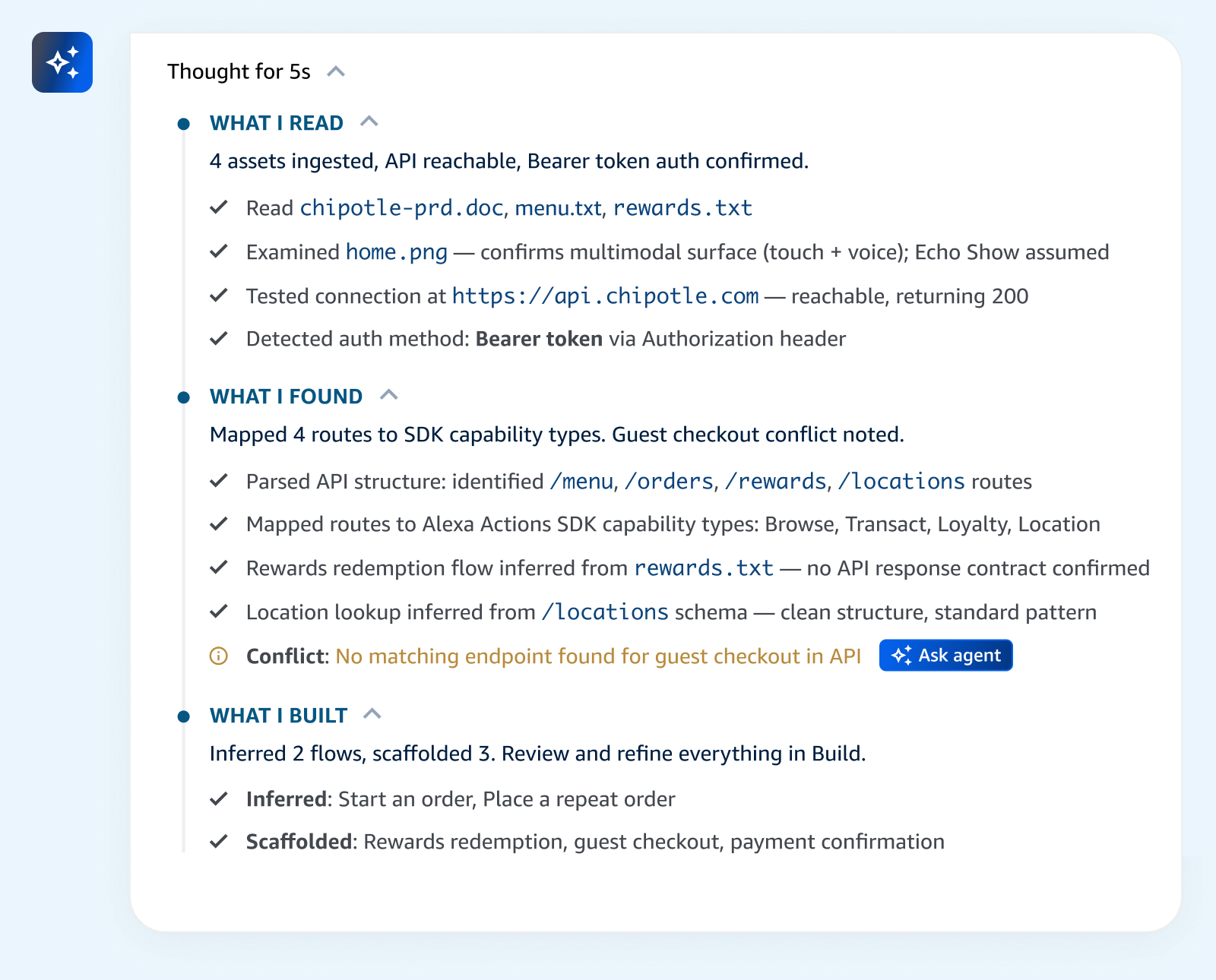

The system maintains context across rapidly shifting technical tasks, which reduces cognitive strain. It never surfaces a problem without an explanation and a path forward.

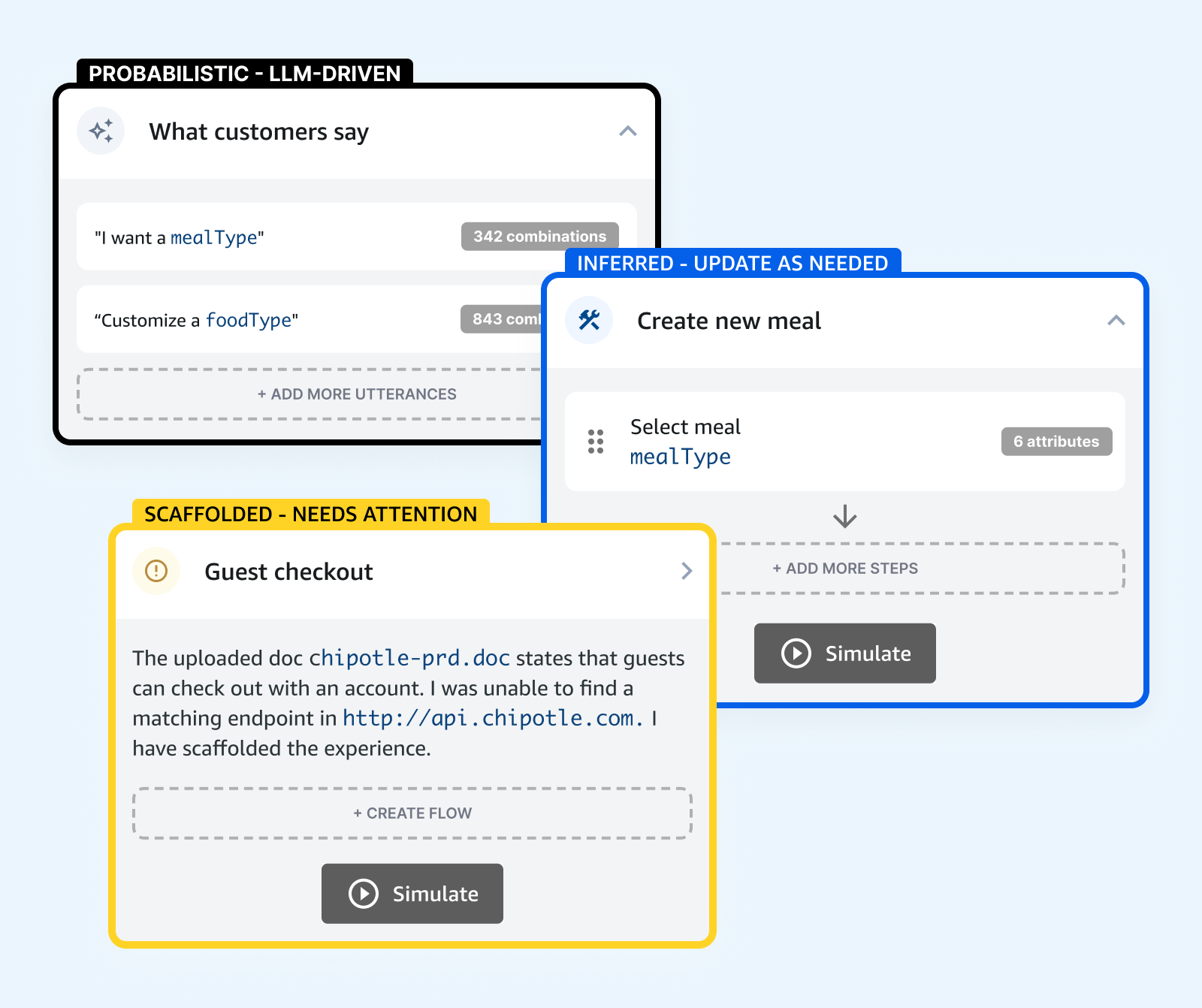

Every generated output is a starting point, not a conclusion

AI-generated results are surfaces for review and refinement, not final answers. The developer’s confirmation is what makes an inference authoritative.

Make intelligence inspectable

Every inferred value is accompanied by a signal note explaining its source and logic, so developers can evaluate the reasoning behind a result, not just the result itself.
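One way to picture the last two tenets together is as a small data shape: an inferred value that carries its own signal note and stays provisional until a developer confirms it. The sketch below is illustrative only. The names `SignalNote`, `InferredValue`, and `confirm` are hypothetical and are not taken from the Alexa+ platform.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class SignalNote:
    """Hypothetical note explaining where an inference came from and why."""
    source: str
    logic: str


@dataclass
class InferredValue:
    """Hypothetical AI-inferred value that is provisional until confirmed."""
    value: Any
    note: SignalNote
    confirmed: bool = False  # only a developer's confirmation makes it authoritative

    def confirm(self, edited_value: Optional[Any] = None) -> "InferredValue":
        # The developer may refine the value before accepting it.
        if edited_value is not None:
            self.value = edited_value
        self.confirmed = True
        return self


# Example: the system proposes a locale and explains its reasoning.
locale = InferredValue(
    value="en-US",
    note=SignalNote(source="project manifest", logic="only locale listed"),
)
locale.confirm("en-GB")  # the developer reviews, adjusts, and confirms
```

Keeping the note on the value itself means any surface that shows the result can also show the reasoning behind it.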

Preserve momentum above all else

The system scaffolds and anticipates rather than blocks. Developers can work at the speed of innovation, not at the speed of remediation.

Platform Overview

This overview provided a framework to scope product, design, and engineering initiatives. It focused on three distinct phases of the Alexa skill creation process: building, deploying, and managing. Each phase has its own developer jobs-to-be-done and expected tools. I defined the balance of responsibilities between humans and agents, and where and how they collaborate best. I also focused on behind-the-scenes agent orchestration and explored the role of judge agents in evaluating, validating, and challenging sub-agent outputs.
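As a rough illustration of the judge-agent idea, the sketch below uses plain functions to stand in for agents. It assumes a simple pattern in which every sub-agent output passes through a judge, and rejected outputs are set aside for human review along with the judge's reason. The names `Verdict`, `orchestrate`, and the toy agents are hypothetical and do not describe the actual Alexa+ orchestration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Verdict:
    """Hypothetical judge result: accept or reject, with an explanation."""
    accepted: bool
    reason: str


SubAgent = Callable[[str], str]
Judge = Callable[[str, str], Verdict]


def orchestrate(
    task: str, sub_agents: List[SubAgent], judge: Judge
) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Run each sub-agent, then let the judge evaluate its output."""
    accepted: List[str] = []
    escalated: List[Tuple[str, str]] = []  # (output, reason) pairs for human review
    for agent in sub_agents:
        output = agent(task)
        verdict = judge(task, output)
        if verdict.accepted:
            accepted.append(output)
        else:
            escalated.append((output, verdict.reason))
    return accepted, escalated


# Toy stand-ins for real agents, for illustration only.
def draft_agent(task: str) -> str:
    return f"draft for {task}"


def empty_agent(task: str) -> str:
    return ""


def non_empty_judge(task: str, output: str) -> Verdict:
    if output.strip():
        return Verdict(True, "output is non-empty")
    return Verdict(False, "empty output")


accepted, escalated = orchestrate(
    "add a timer skill", [draft_agent, empty_agent], non_empty_judge
)
```

Returning the rejection reason alongside each escalated output keeps the judge's decision inspectable rather than silent.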

My Approach

- Grounded the work in both primary and secondary research, building a shared understanding of how developers build for Alexa

- Used design as an early research tool by sketching flows and vibe-coding UX explorations

- Benchmarked against leading developer platforms to identify table stakes and areas of opportunity

- Translated research findings into Jobs-to-be-Done and Developer Experience Outcomes, giving the team a shared language for what success looks like at each stage of the journey

- Audited the legacy toolset to establish a baseline, identifying which workflows were candidates for agentic automation and which required rethinking from scratch

- Prioritized the product design roadmap against both urgent internal needs and the longer-term requirements of third-party developers

Key Decisions

- Prioritized first-party testing tools and the third-party SDK integration experience, because reducing operational load for internal teams and lowering technical friction for external developers were the two highest-leverage entry points into the broader DX rebuild.

- Chose a web-based agent orchestrator as the primary interaction surface to ensure third-party developers without deep Alexa expertise could complete complex tasks without switching contexts.