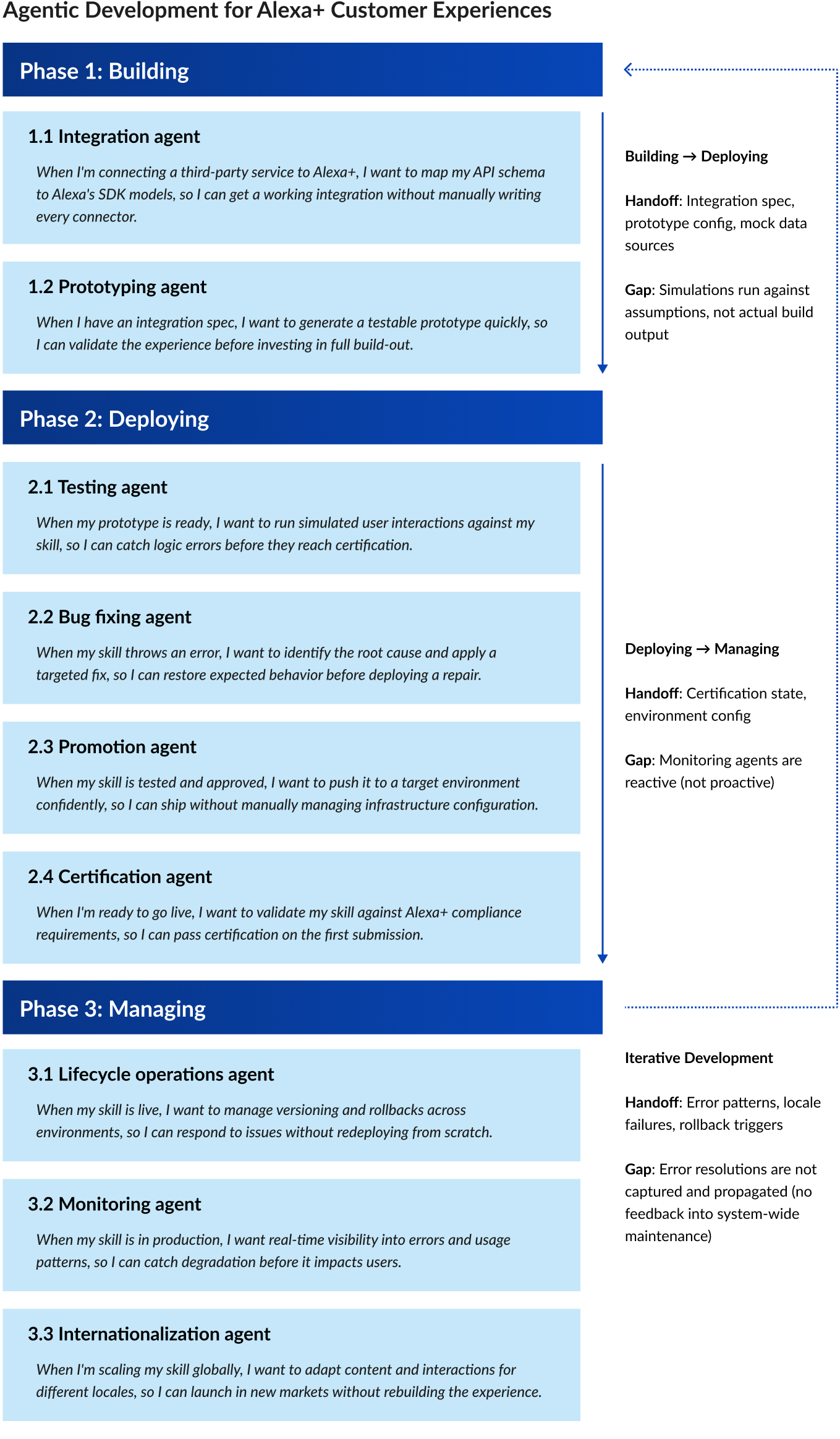

The Situation

When Alexa+ began scaling its agentic platform, the underlying investment was substantial: multiple specialized sub-agents handling integration, debugging, documentation, and code validation. But from a developer’s perspective, none of it felt like a system. Each agent had its own surface, its own interaction model, its own context. A PM investigating a production bug would hand off to an engineer, who then had to rebuild understanding from scratch in a completely different tool. The main problem was a lack of coherence across the automation functionality.

The Strategy

I started by interviewing engineers about how they actually worked. What surfaced was a consistent pattern: context dropped at handoffs, task switching introduced errors, and trust in automation broke down when the fix surface felt disconnected from the real system. That research became the design brief.

The core tension I held throughout: developers needed automation, but they also needed to feel in control. With too much autonomy, the system feels untrustworthy. With too many manual gates, the experience negates the value of agentic tooling entirely.

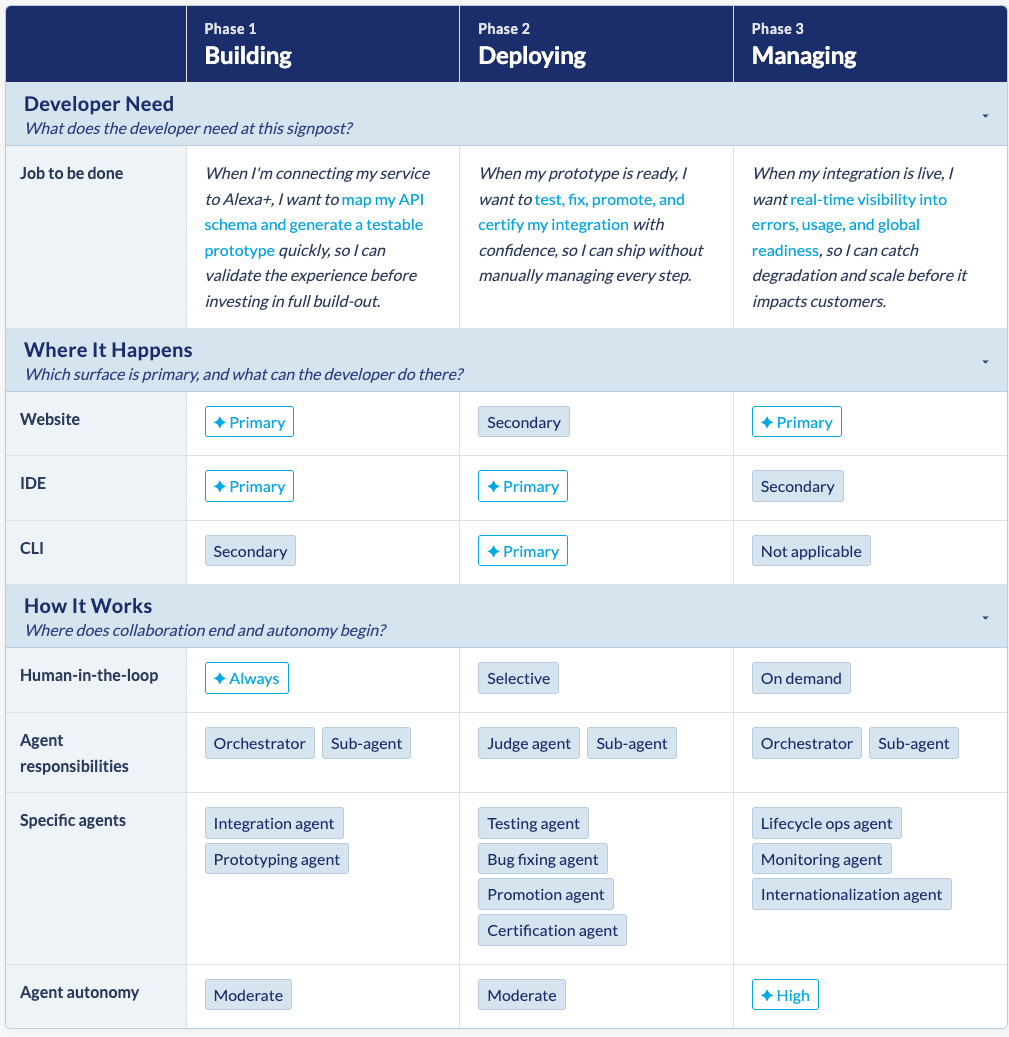

Rather than designing specialized UIs for each sub-agent, I reframed the problem: one trusted collaborator that persists across surfaces and carries context with it. Natural language became the shared interaction model, manifesting consistently across web, IDE, and CLI.

Orchestration example: The bug-fixing journey

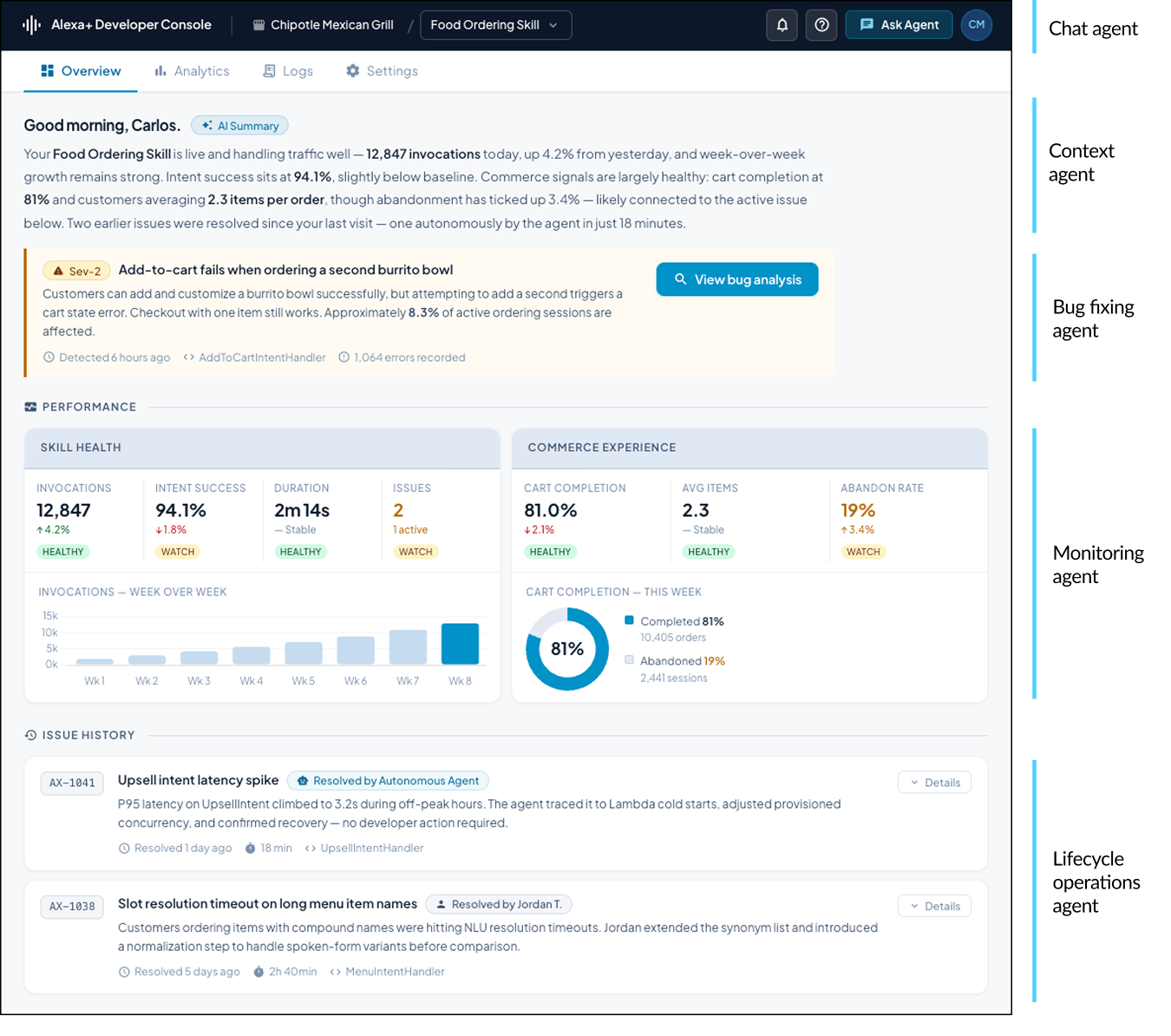

Screen 1: Overview

Goal: Understand the health of my skill and know what needs my attention today, without digging for it.

- Leading with a synthesized AI summary reduces cognitive load at the start of a session. Rather than requiring the developer to scan multiple data sources, the agent surfaces the most important read upfront. This follows progressive disclosure: give people the conclusion first, let them drill in if they need more.

- Separating an active incident from trend data respects the difference between urgent and important. Mixing a Sev-2 into the same visual register as weekly charts creates false equivalence. A distinct amber treatment with a left border draws on pre-attentive processing, so the eye finds it before the brain has to work.

- Splitting commerce and skill health into distinct panels reflects a core IA principle: organize by audience, not by data type. A shared panel with mixed signals forces everyone to filter out what doesn’t apply to them.

- Showing resolver attribution on past issues addresses a known adoption barrier for AI tools: trust. People are more willing to delegate to a system when they have visibility into its track record. The history panel does that work passively.

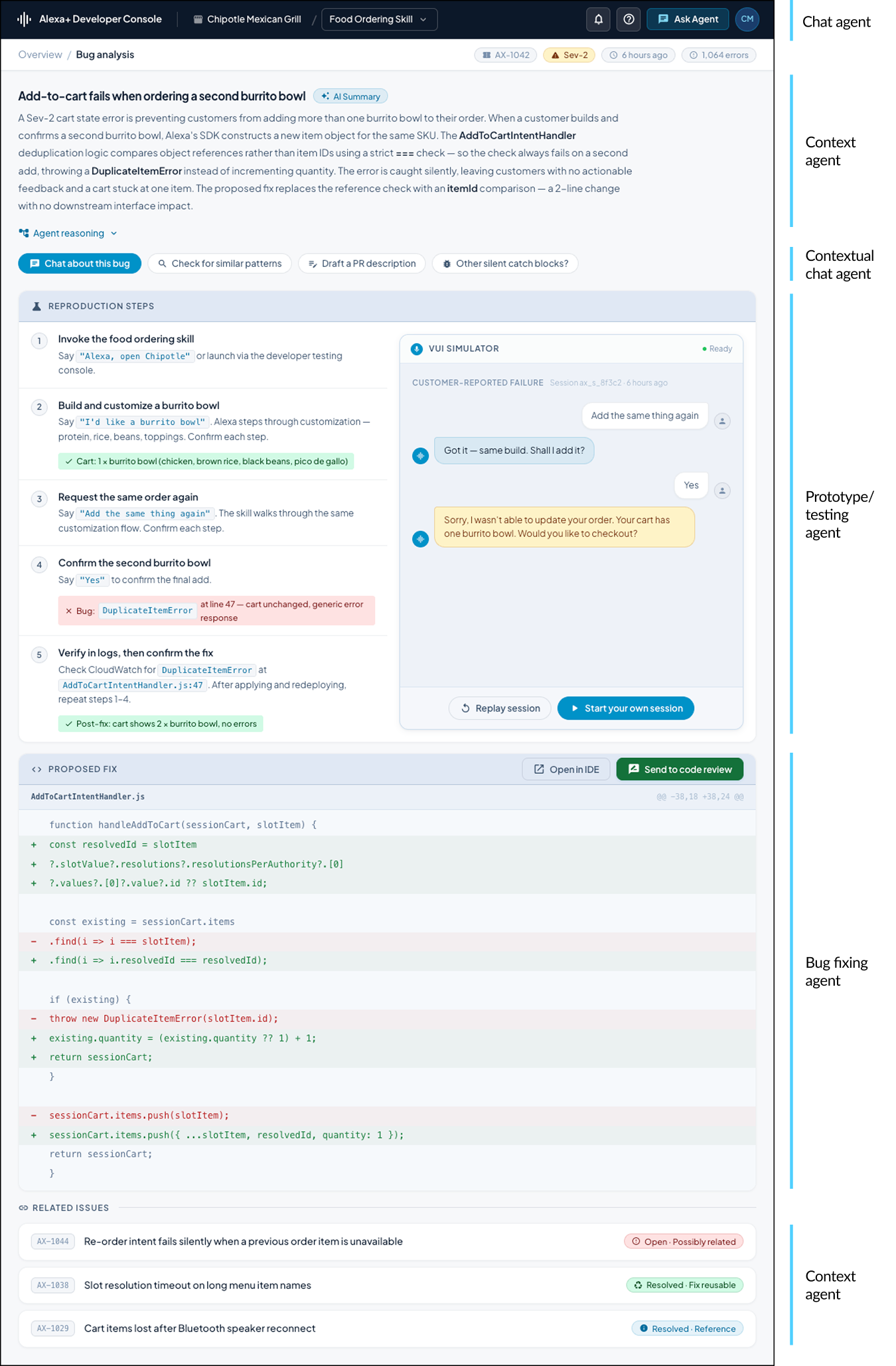

Screen 2: Bug Analysis

Goal: Understand what broke, reproduce it, and get to a fix I can act on, without having to piece it together myself.

- Ordering the page to match the natural debugging workflow (understand, reproduce, diagnose, fix) reduces task-switching and working memory load. When the interface reflects the mental model of the task, developers spend less effort navigating and more effort solving.

- Making agent reasoning collapsible respects the difference between novice and expert users. Developers who trust the agent skip it. Developers who need to verify it access it in one step. Surfacing it by default would add noise for the majority to serve the minority.

- Placing “Chat about this bug” at the front of the suggestion row signals that conversation is the primary affordance, not a fallback. In AI-native interfaces, the ordering of actions shapes how users understand the tool. Leading with chat positions the agent as a collaborator rather than a report generator.

- Defaulting the VUI simulator to the customer-reported failure rather than an empty state gives the developer immediate context before they begin reproducing. This applies recognition over recall: seeing the broken experience is faster and more reliable than constructing it from a description alone.

- Showing a detailed code diff rather than a minimal patch makes the fix reviewable, not just applicable. Including surrounding context, such as ID extraction from the SDK’s slot resolution chain, lets the developer evaluate the reasoning behind the change rather than trusting it blindly.

- Stripping related issues down to title and badge only keeps the level of detail proportionate to the moment. By the time the developer reaches this section, they have a fix in hand. The purpose of related issues is ambient awareness, not active investigation, so body copy would compete with the primary task.

Key Decisions

- Where orchestration lived: Surface support was prioritized based on real software development lifecycle workflows. The web dashboard served as the aggregation and assignment layer. The IDE extension was integrated into the tool where active development work happened. The CLI was scoped as an interim solution before the orchestrator agent was ready. Meeting builders where they were was a core principle that enabled the system to earn trust.

- What context traveled: Not everything moved between surfaces. Context handoffs were intentionally scoped by role and recipient. What a PM needed at handoff was different from what an engineer needed to pick the work up. That distinction shaped both the data model and the interaction design.

- What I pushed back on: I advocated against fragmented, agent-specific dashboards and pushed for natural language as a platform-level capability, not a feature added to individual tools. Both required sustained alignment work across product and engineering.
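
To make the "What context traveled" decision concrete, here is a minimal sketch of what a role-scoped handoff could look like in a data model. Everything in it is an assumption for illustration: the `BugContext` fields, the `HANDOFF_FIELDS` mapping, and the `scope_for` helper are hypothetical names, not the platform's actual schema or API.

```python
from dataclasses import dataclass


# Hypothetical shape of the context an agent accumulates for one bug.
# Field names are illustrative; the real data model is not described here.
@dataclass(frozen=True)
class BugContext:
    summary: str
    customer_impact: str
    repro_steps: list[str]
    proposed_diff: str
    agent_reasoning: str


# Assumed mapping from recipient role to the fields that travel at handoff.
HANDOFF_FIELDS = {
    "pm": ("summary", "customer_impact"),
    "engineer": ("summary", "repro_steps", "proposed_diff", "agent_reasoning"),
}


def scope_for(role: str, context: BugContext) -> dict:
    """Return only the fields the recipient role needs to pick the work up."""
    if role not in HANDOFF_FIELDS:
        raise ValueError(f"unknown role: {role!r}")
    return {name: getattr(context, name) for name in HANDOFF_FIELDS[role]}
```

The point of the sketch is the explicit mapping: what travels is decided per recipient rather than by passing everything along, so a PM gets the summary and impact while an engineer also gets the reproduction steps, the diff, and the reasoning behind it.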

The Outcomes

- Influenced roadmap and platform direction across web, IDE, and CLI

- Established natural language as a shared interaction model across the developer platform

- Provided a reusable UX framework for onboarding new agent capabilities

- Unblocked early product and technical decisions that had stalled without a shared mental model

- Set the foundation for human-in-the-loop moderation patterns across autonomous and assisted interactions